Read the full article on KETV 7

There’s a push in the Nebraska Legislature to create safety guidelines for artificial intelligence. The Banking, Commerce, and Insurance Committee heard testimony for two bills Monday afternoon.

Both of the bills would create regulations aimed at protecting minors.

Artificial intelligence is a rapidly developing technology, and Nebraska state Sens. Eliot Bostar and Tanya Storer said it’s presenting a new set of risks that need to be addressed.

Bostar introduced LB 1185. It would establish the Conversational Artificial Intelligence Safety Act.

“First, the bill requires clear and conspicuous disclosure when a user is interacting with artificial intelligence,” Bostar said. “So no one, especially a minor, is misled into believing they are speaking with a real person.”

Bostar said it also prohibits reward systems for continuous use and puts safeguards in place for minors to prevent any sexually explicit content or interactions. Bostar said the bill also draws a clear line around mental health.

“It does prohibit operators from representing these systems as providers of professional mental or behavioral health care,” Bostar said. “When users express suicidal ideation or self-harm, operators must have protocols in place to refer users to real crisis resources.”

Several people testified in support of the bill; many said it’s a policy that protects people while still allowing technology to move forward.

One testifier, a clinical psychologist, said the bill is necessary because children need to be protected. She said AI chatbots are not therapists.

“They’re in trouble of thinking they’re getting help when they’re not, and talking to AI instead of an adult who is deeply invested in their well-being.”

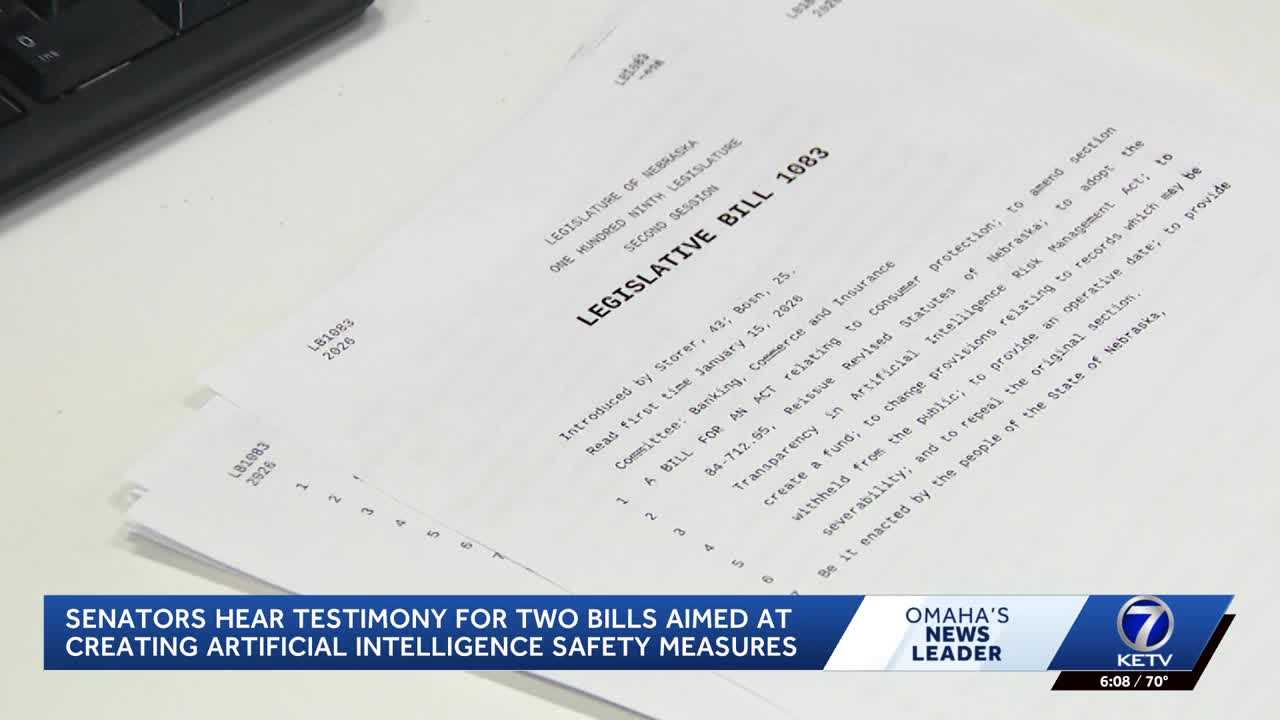

Storer’s bill, LB 1083, also focuses on the mental health of children by establishing the Transparency in Artificial Intelligence Risk Management Act. She said it holds large AI developers accountable.

“It does not ban any technology. It does not impose specific technical requirements. There is no way of developing AI that it prohibits,” Storer said. “What it does is require the largest AI developers and chatbot providers to tell us how they are managing these risks, to write down their safety plans, publish those plans, and report safety incidents to the attorney general.”

During Monday’s hearing, Storer shared multiple stories in which children died after relying on AI for help.

“In 2025, a 16-year-old boy in California took his own life after receiving encouragement and detailed guidance from ChatGPT,” Storer said.

Advocacy groups such as Mothers Against Media Addiction, or MAMA, agree. Michele Neily, a leader of the group and a former law enforcement officer, said action is needed now, before harms become even more widespread.

“Two in three teens use AI chatbots, and 12% have turned to them for emotional or mental health support,” Neily said.

No one spoke in opposition to either bill during Monday’s hearing.
